By Carolyn B. Drake, MD, MPH

Peer Reviewed

News:

In the fortnight since Donald Trump was inaugurated as the 45th president of the United States, we’ve seen a flurry of political activity as the new president …

By Samantha K. Newman, MD

Peer Reviewed

As Melania and Barron Trump are (not) settling into the White House, our new president spent Friday afternoon issuing an executive order that bans individuals from …

By Calvin Ngai, MD

Peer Reviewed

In former President Barack Obama’s last farewell speech, he asked all fellow Americans to continue to believe in our ability to create change. This past weekend, the day after Donald J. Trump was sworn into the presidential office and our country bid one last farewell to …

By Scott Butler, MD

Peer Reviewed

A presidential goodbye. A contentious press conference. A salacious dossier from a British spy. It’s been quite a week.

As Republican legislators transition from …

By Nancyanne Schmidt, MD

Peer Reviewed

This week, the intelligence report commissioned by President Obama on suspected hacking during the recent presidential election revealed that Russian President Vladimir …

By Derek Moriyama, MD

Peer Reviewed

It is official. The members of the electoral college have cast their votes and Donald Trump will be the next President of the United …

By B. Corbett Walsh, MD

Peer Reviewed

As many of us reflect on the festivities that occurred during the Department of Medicine Holiday Party this past weekend, all can agree that winter is not only coming, but that it has arrived. Earlier …

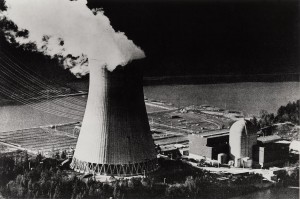

By Shriram Alapaty, MD

Peer Reviewed

This week, America’s president-elect Donald Trump continued to defy expectations by selecting Mr. Scott Pruitt, a close ally of the fossil fuel industry, to head …