How to Counsel a Patient on Prostate Cancer Screening in 5 Minutes

By Caprice Cadacio, MD

Faculty Peer Reviewed

A good screening test is relatively inexpensive and noninvasive. In …

By Ami Jhaveri, PGY-3

Faculty Peer Reviewed

Clinical Case:

K.M. is a 61-year-old woman with hypertension diagnosed with non-Hodgkin’s lymphoma 1 month ago. Her only medication is hydrochlorothiazide. She …

By David Hormozdi, MD

The weather outside may be cooling off but the debate surrounding lung cancer screening is heating up once again as preliminary results released from The National …

By Jon Emile Kenny, MD

Faculty Peer Reviewed

“You mean I’ve got cancer and my kidneys are failing, doc?” said my frail patient on the Bellevue oncology service shortly …

By Emily Slater

Faculty Peer Reviewed

Mr. R is a 46-year-old man with a past medical history of polycythemia vera on hydroxyurea and chronic hepatitis B and C who presented with acutely worsening …

By David Ecker, MD

Faculty Peer Reviewed

Over the last several decades, Westernized countries have become 24-hour societies. Approximately 21 million workers in the US are on non-standard work shifts, including …

David Shabtai

Faculty Peer Reviewed

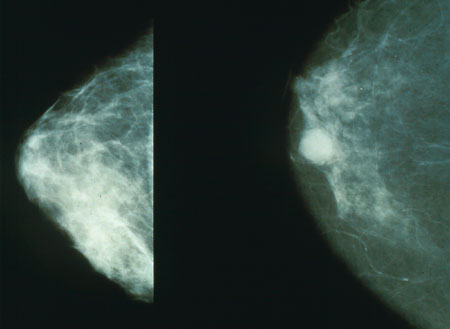

In a bold move, the U.S. Preventive Services Task Force recently changed its breast cancer screening guidelines – recommending beginning screening at age 50 and even then only …

Chief-of-service rounds is a new feature of Clinical Correlations. Here we summarize Bellevue Hospital’s Chief of Service Rounds moderated by the Chief of Medicine, Nate Link, MD. This multidisciplinary bimonthly conference focuses on …
