By Jonah Klapholz

Peer …

By Mercedes Fissore-O’Leary

Peer Reviewed

I.

It is his youngest’s birthday today. His oldest is in the military, like he was. Except he served in the navy, in Vietnam. He has a …

By Ian Jaffe

Peer Reviewed

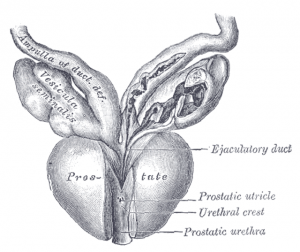

Recent headlines about increasing rates of metastatic prostate cancer have had many patients asking if they should be tested.1 This article will review the history and role of …

By Joshua Novack, MD

Peer Reviewed

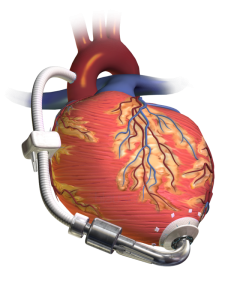

Case: A 74-year-old male with a history of heart failure with reduced ejection fraction (EF 20%) diagnosed 10 years ago comes in with subacute progressive lower …

By Raymond Barry

Peer Reviewed

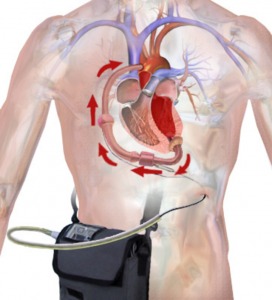

A 2020 report published by the American Heart Association (AHA) in conjunction with the National Institutes of Health (NIH) found that an estimated 6.2 million American adults had …

By Michael Moore

Peer Reviewed

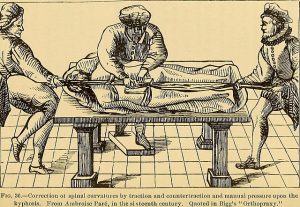

“Too many complex back surgeries are being performed and patients are suffering as a result,” wrote National Public Radio health science journalist Joanne Silberner in her 2010 article …

By Kathryn Hockemeyer

Peer Reviewed

I caught up with a friend who works in environmental, social, and corporate governance investing during a lull in the COVID-19 pandemic. Seconds into the conversation, he asked, …

By Akshay N. Pulavarty, MPH

Peer Reviewed

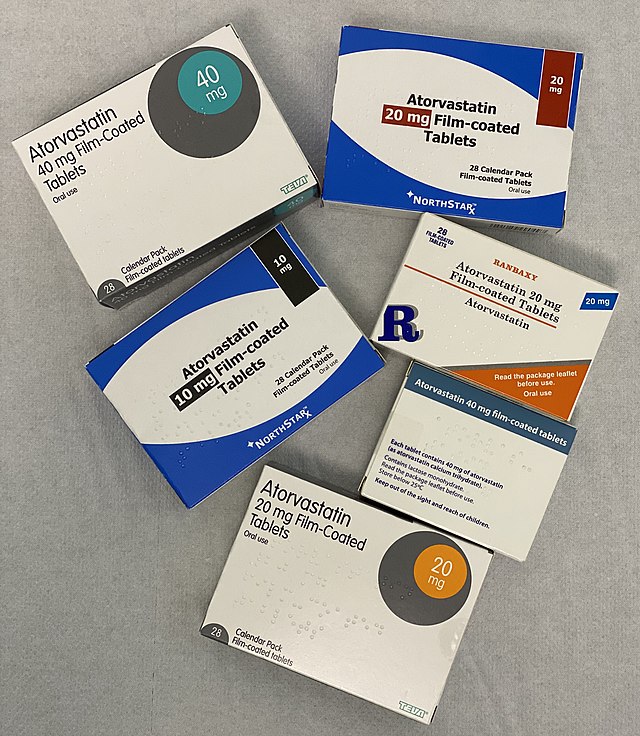

A quick look into the medicine cabinet of anyone sixty or older will likely reveal a statin. Primary prevention with high-intensity statins has substantially reduced the …