By Anna Hirsch

Peer Reviewed

The use of opioid medications for chronic low back pain, or for any chronic non-cancer pain complaint, is still a source of controversy …

By Thatcher Heumann, MD

Peer Reviewed

Background

Direct Oral Anticoagulants (DOACs) are a class of medications consisting of the thrombin inhibitor, dabigatran (Pradaxa), and the factor Xa inhibitors, rivaroxaban (Xarelto), apixaban (Eliquis), and edoxaban …

By Nishanth Srivaths Iyengar, MD and David M. Oshinsky, MD

Peer Reviewed

Federal agencies such as the Centers for Disease Control and Prevention (CDC) publish exhaustive public health guidelines and …

Dixon Yang, MD

Peer Reviewed

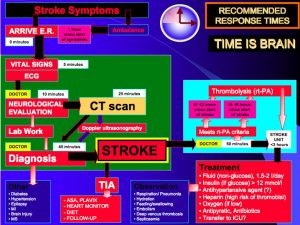

Case and Introduction

A 52-year-old right-handed woman with hypertension is brought in by ambulance after her daughter notices a sudden onset of nonsensical speech and trouble walking. On exam, …

By Joshua Novack

Peer Reviewed

Patients often come into clinics on a grocery list of medications. Common prescriptions include lisinopril 20 mg, amlodipine 2.5 mg, metformin 500 mg, and aspirin 81 mg. One …

By Andrew Segoshi

Peer Reviewed

If you live in New York, you’ve no doubt encountered the opioid epidemic sweeping the nation in the past decade. Whether it’s firsthand experience passing opioid users who congregate in the street on St. Mark’s every summer, hearing …

By William Plowe

Peer Reviewed

Metformin has been the first-line drug in type 2 diabetes for over a decade, but its possible benefit in type 1 diabetes (DM1) is still a matter of …

By Kevin Rezzadeh

Peer Reviewed

Injuries associated with amateur boxing include facial lacerations, hand injuries, and bruised ribs.1 While many of the superficial wounds and bone fractures can completely …