By Rachel Kaplan Hoffmann, M.D., M.S.Ed., and Keith Hoffmann, J.D.

Peer Reviewed

On December 6, 2013, a two-year-old boy living in southeastern Guinea became the first victim of the …

By Nathan King

Faculty Reviewed

Doctors are known to be some of the worst patients, and from personal experience I predict that medical students are not too far behind. That’s why when I finally found the time to take a proactive step …

By Luke O’Donnell, MD

Peer Reviewed

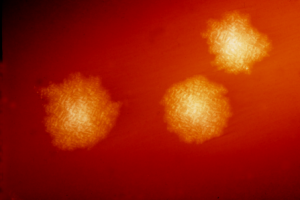

Once formidable diseases, pneumonia, bacteremia, and meningitis are all now considered “bread-and-butter” internal medicine. Streptococcus pneumoniae is one of the …

By Pritha Subramanyam

Peer Reviewed

Mrs. CS is a 66-year-old Indian female who presents for a cardiology follow-up. The patient has a history of mitral regurgitation secondary to …

By Theresa Sumberac, MD

Peer Reviewed

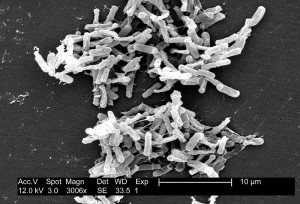

Antibiotic-associated diarrhea is a common complication of antibiotic therapy, occurring in 5% to 39% of all patients receiving treatment. Nearly one-third of these cases …

By Richard E. Greene, MD

Peer Reviewed

In July 2012, the FDA approved the daily use of tenofovir-emtricitabine (Truvada, a single blue pill) as pre-exposure prophylaxis …

By Aaron Smith, MD

Peer Reviewed

It’s become a familiar sight to travelers: airline passengers wearing respiratory masks to filter pathogens from the cabin air. To those not wearing masks, the …

By Aaron Smith, MD

Peer Reviewed

First introduced in the late 1980s, proton pump inhibitors (PPIs) have revolutionized the treatment of gastric acid-related disorders and have been described as a miracle drug …