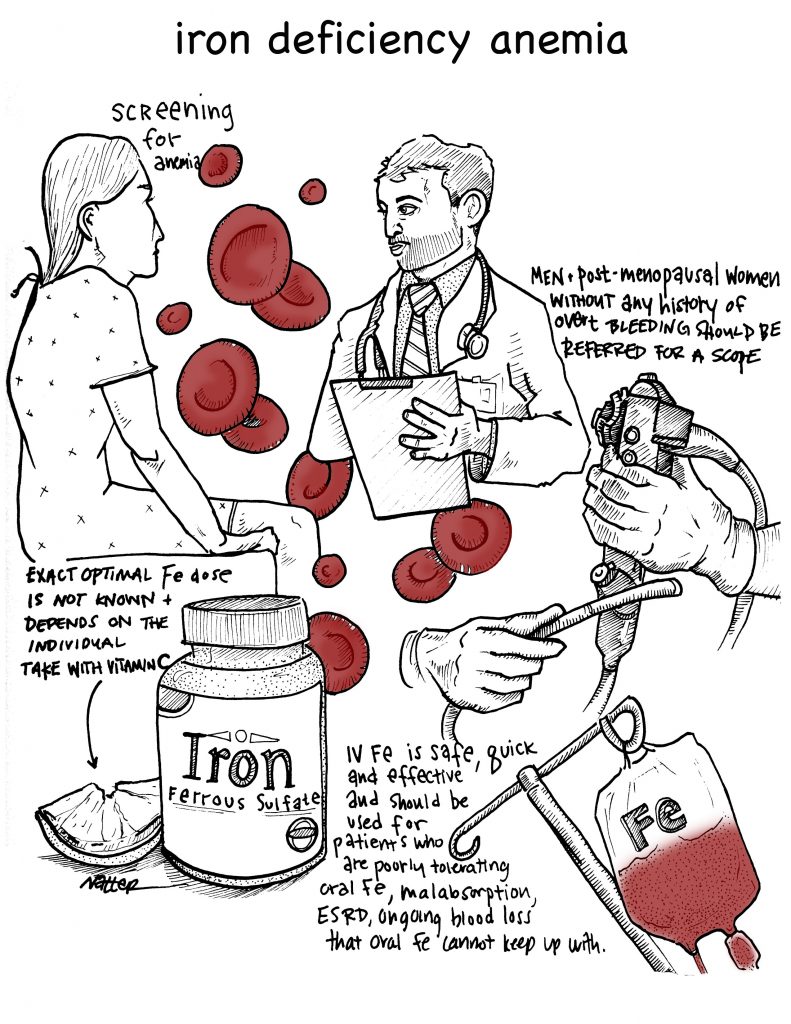

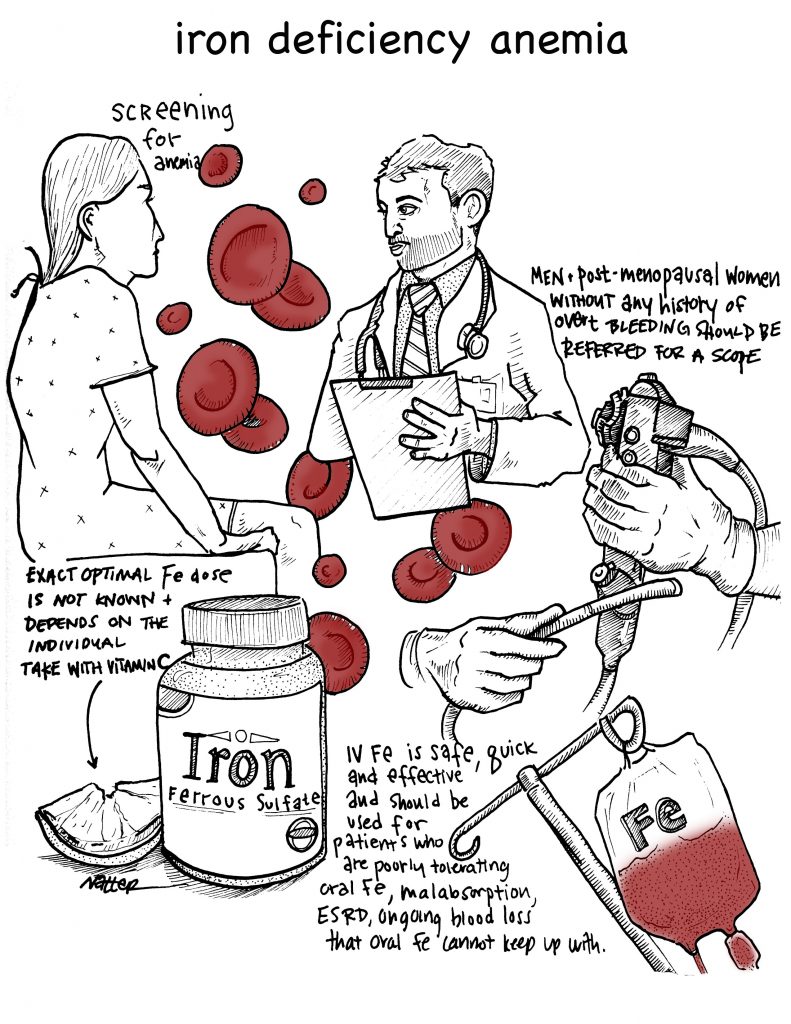

Listen to the 5 Pearls segment on Iron Deficiency Anemia! By Cary Blum MD, Marty Fried MD and Shreya P. Trivedi MD; illustration by Mike Natter MD

Time Stamps:

- Should patients be screened …

By Sara Stream, MD

Peer Reviewed

As resident physicians, we are taught to supplement serum potassium to a goal level of 4.0 mEq/L in all hospitalized patients. While the dangers of severe potassium abnormalities are well established, …

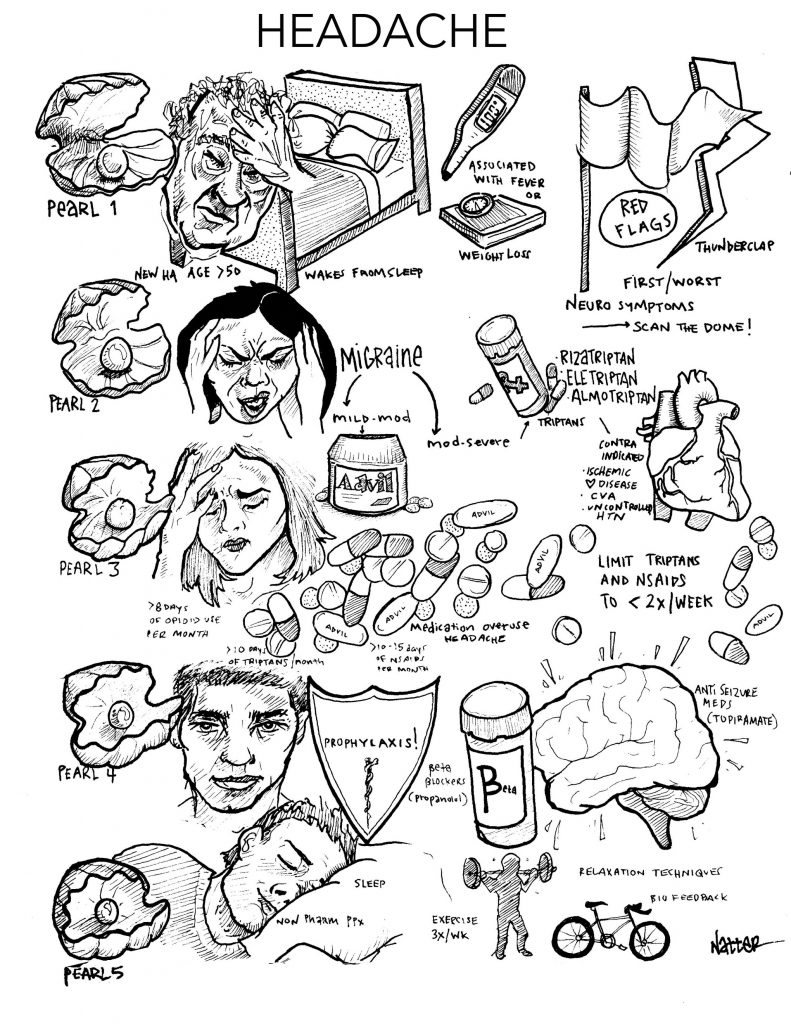

Listen to CORE IM’s first 5 Pearls segment on Headaches!

Time Stamps:

By Helen Ma, MD

Peer Reviewed

Our new Spotlight series uses case vignettes to explore diagnosis, pathophysiology, and management of a wide variety of diseases seen in the outpatient and inpatient settings. …

By Leonard Naymagon, MD

Peer Reviewed

Vaso-occlusive crisis (VOC), or pain crisis, is the most common clinical manifestation of sickle cell disease (SCD) and is responsible for the majority of emergency department (ED) visits …

By Karen McCloskey, MD

Peer Reviewed

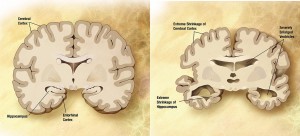

In May 2012, the U.S. Department of Health and Human Services unveiled the “National Plan to Address Alzheimer’s Disease,” in response to legislation signed by President …

By Eric Jeffrey Nisenbaum, MD

Peer Reviewed

Mr. O is a 93-year-old man with a past medical history notable for severe Alzheimer’s dementia and amputation of the left upper extremity secondary …

By Maxine Wallis Stachel, MD

Peer Reviewed

The Scale of the Problem

Despite decades of rigorous data collection, drug research, patient education and evidence-based practice, ischemic heart disease (IHD) and congestive heart failure (CHF) remain …